This post is part 1 of a 3-part series on the semiconductor industry - how it began, the emergence of various players and current market dynamics, and new initiatives across the globe to democratise semiconductor manufacturing.

In this post:

Creation of the Integrated Circuit

Silicon Valley & the Rise of Intel

Japan’s Semiconductor Program

Creation of the Integrated Circuit

Before the integrated circuit was invented, electronics engineers connected circuit components (transistors, capacitors, resistors, diodes) by hand using very thin copper wires. Larger circuits needed more components and more connections. That increased the time and labour required and made the circuits extremely unreliable, because the failure of a single interconnection could render the entire circuit worthless. Jack Kilby (at Texas Instruments) and Robert Noyce (at Fairchild Semiconductor) resolved this ‘tyranny of numbers’ by making all the circuit components from a single block of semiconductor material: germanium in Kilby’s first prototype, silicon in Noyce’s design. Noyce’s version also formed the interconnections as metal lines laid down directly on the silicon.

With integrated circuitry, the neat patterns of Boolean logic could be mapped directly onto the surface of the silicon chip; an entire addition circuit would now take up less space and consume less power than a single transistor did in the days of discrete components. With the advent of the chip, the digital computer had finally become as elegant in practice as it was on paper.

T. R. Reid, The Chip

Thus was born the semiconductor industry in the early 1960s, with Texas Instruments and Fairchild Semiconductor at the forefront, holding the most important patents in the history of electronics and computing. The first integrated circuits, launched for commercial use in 1961, were too expensive to compete with discrete components and attracted little attention from the industry. US President John F. Kennedy’s call to beat the Soviet Union to the Moon then drove rapid development of advanced electronic systems for space missions. The guidance and navigation systems aboard the spacecraft required small, powerful computers that had to be built from integrated circuits, and the Apollo program ended up purchasing millions of them.

The desire to make weapons smaller and more powerful also led to the use of ICs in defence, which promoted development and production and helped bring down costs. Despite the interest from space and defence, demand remained limited by price. Robert Noyce addressed this at an electronics industry conference in 1965, where he announced that all of Fairchild’s ICs would be priced at one dollar, less than it cost to make them. His bet paid off:

Less than a year after the dramatic price cuts, the market had so expanded that Fairchild received a single order (for half-a-million circuits) that was equivalent to 20 percent of the entire industry’s output of circuits for the previous year. One year later, in 1966, computer manufacturer Burroughs placed an order with Fairchild for 20 million integrated circuits.

Leslie Berlin, The Man Behind the Microchip

The chips were all made by hand, mostly by women, who were considered better suited to the delicate handiwork required. Mechanising production was not thought worthwhile. The technology was changing so rapidly that it was simpler to retrain workers than to keep developing sophisticated machinery.

In 1951, there were 4 semiconductor companies in the US; by the early 1960s, there were over a hundred. Gordon Moore, then head of research at Fairchild Semiconductor, was prescient in his 1965 article in Electronics magazine:

Integrated circuits will lead to such wonders as home computers—or at least terminals connected to a central computer—automatic controls for automobiles, and personal portable communications equipment. The electronic wristwatch needs only a display to be feasible today.

But the biggest potential lies in the production of large systems. In telephone communications, integrated circuits in digital filters will separate channels on multiplex equipment. Integrated circuits will also switch telephone circuits and perform data processing.

Silicon Valley & the Rise of Intel

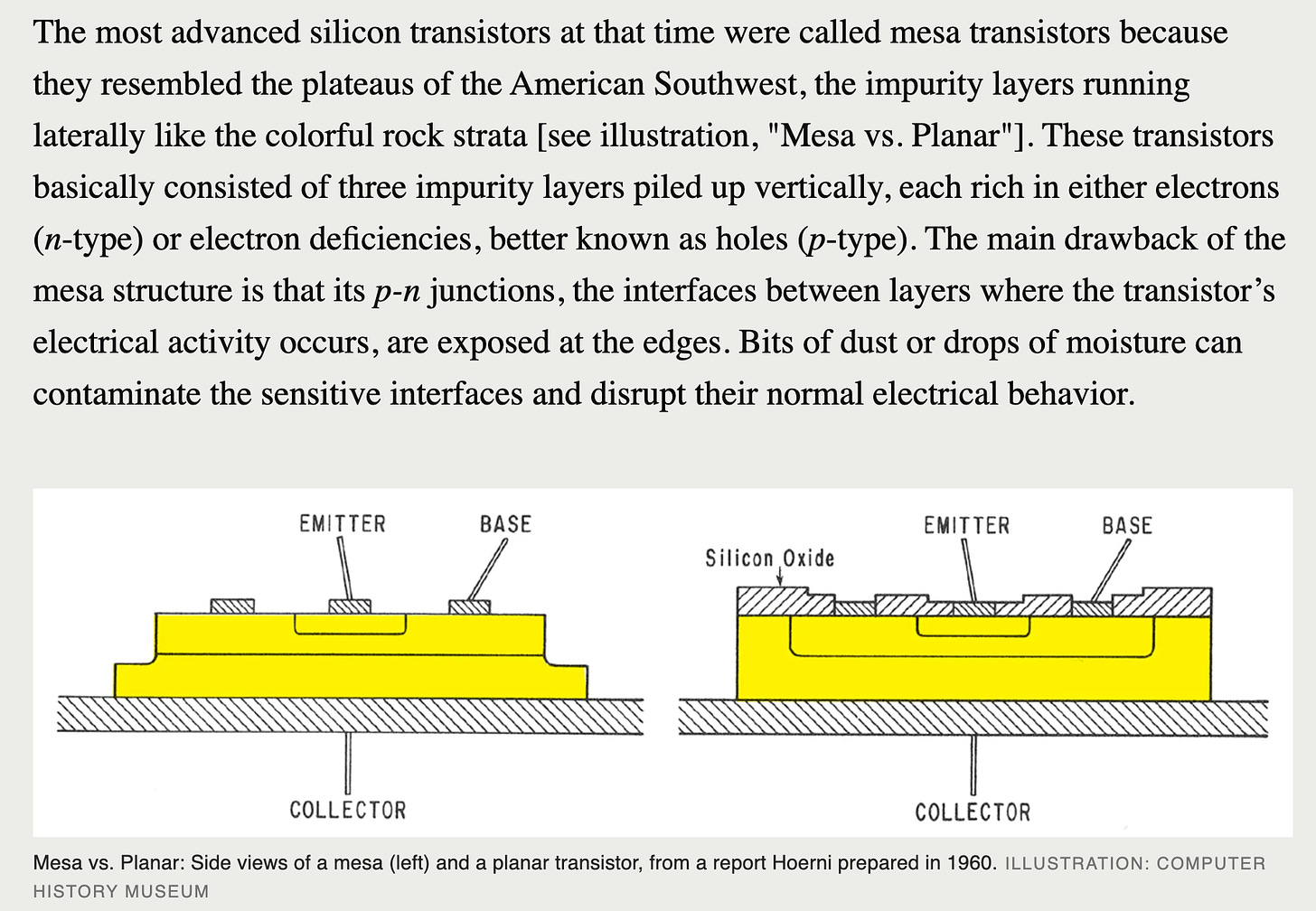

Fairchild Semiconductor, started by the Traitorous Eight - Julius Blank, Victor Grinich, Jean Hoerni, Eugene Kleiner, Jay Last, Gordon Moore, Robert Noyce, and Sheldon Roberts - with the help of Arthur Rock, had pioneered new production processes for integrated circuits. Noyce would set up teams to compete internally on a problem and find the fastest, most practical solution. The planar process, designed by Jean Hoerni, was born in one such contest.

The Fairchilders tested Hoerni’s idea by deliberately leaving the silicon dioxide layer on top of the p-n junctions in a mesa transistor. The transistor performed better. The new process also improved the reliability of Fairchild’s mesa transistors, whose failures had been about to cost the company its first major government contract. Not only did this redeem Fairchild, it also forced every other semiconductor firm to license the planar process or risk being wiped out.

Once the IC had been created, Fairchild grew rapidly: sales rose from half a million dollars in 1959 to $130 million in 1966. But all the profit was sent back to its parent company, Fairchild Camera & Instrument, on the East Coast. The largest semiconductor firm in the United States, home to the brightest minds in the industry, refused to offer stock options to its whip-smart engineers and began losing them to competing firms, most of them to National Semiconductor.

When the situation became untenable and Robert Noyce tired of taking orders from East Coast suits, he and Gordon Moore, along with their devoted disciples (most notably Andy Grove), left to start a new company.

Until then, most innovation in integrated circuits had targeted ‘logic’ circuits, those that perform mathematical operations and calculations. Information was stored in ferrite core memories. A number of experts, however, foresaw semiconductor chips being used as memory.

Initially incorporated as NM Electronics (for Noyce and Moore), the company was unsure which kind of memory chip to build. Multichip memory modules? Schottky bipolar memory? Silicon-gate metal-oxide semiconductor (MOS) memory? Trying all three settled the matter: “Multichip memory modules proved too hard and the technology was abandoned without a successful product. Schottky bipolar worked just fine but was so easy that other companies copied it immediately and we lost our advantage. But the silicon gate metal-oxide semiconductor process proved to be just right.”

Intel announced its first product, the 3101 Static Random Access Memory (SRAM), less than 18 months after its founding, when it had all of 18 employees. While the rest of the industry was catching up, Intel developed the first Schottky bipolar ROM (the 3301) and the 1101, an SRAM built with MOS technology. MOS chips were smaller and denser, and they used silicon-gate technology that simplified the manufacturing process. Intel was already leading semiconductor innovation with its MOS designs when a contract with the mainframe computer manufacturer Honeywell led to its most revolutionary idea.

Honeywell wanted Intel to fabricate a custom DRAM (dynamic random access memory) using MOS rather than the prevalent bipolar technology. Intel fulfilled the contract. Unhappy with the chip’s performance, however, it set out to design its own MOS DRAM. Robert Noyce once again wanted to lead the market in both price and technology, and he set a target of 1 cent per bit for the 1-kilobit DRAM.

The 1-kilobit 1103 DRAM was introduced in October 1970. In 1972, it became the best-selling integrated circuit in the world.

Japan’s Semiconductor Program

There are differing accounts of how IC manufacturing began in Japan. T.R. Reid writes in The Chip:

There were signs during the early 1960s and 1970s that the Japanese had a collective eye on the semiconductor business. The big Japanese electronic firms - outfits like Fujitsu, Nippon Electric Company (NEC), Hitachi and Sony - began buying licenses for every U.S. semiconductor patent they could find; between 1964 and 1970, royalty payments from Japan on U.S. semiconductor patents rose by a factor of 10, from $2.6 million to $25 million per year.

Another account, however, describes how Robert Noyce approached Japan’s Ministry of International Trade and Industry (MITI) for permission to manufacture semiconductors in Japan. MITI refused. Noyce then approached an executive at Nippon Electric Company (NEC), who convinced senior management to buy the patent and begin manufacturing ICs. Interestingly, buying the patent gave NEC an exclusive license to the technology in Japan. As a result, “it became impossible for even Fairchild to export [planar technology ICs] to Japan.”

Funded through a centralised national program run by the Japanese government, and driven by W. Edwards Deming’s ideas on high-quality production and the availability of cheaper labour, Japanese firms soon captured a large share of the global semiconductor market. Their gains showed both in the supply of chips and in the production of consumer electronics such as digital watches and pocket calculators.

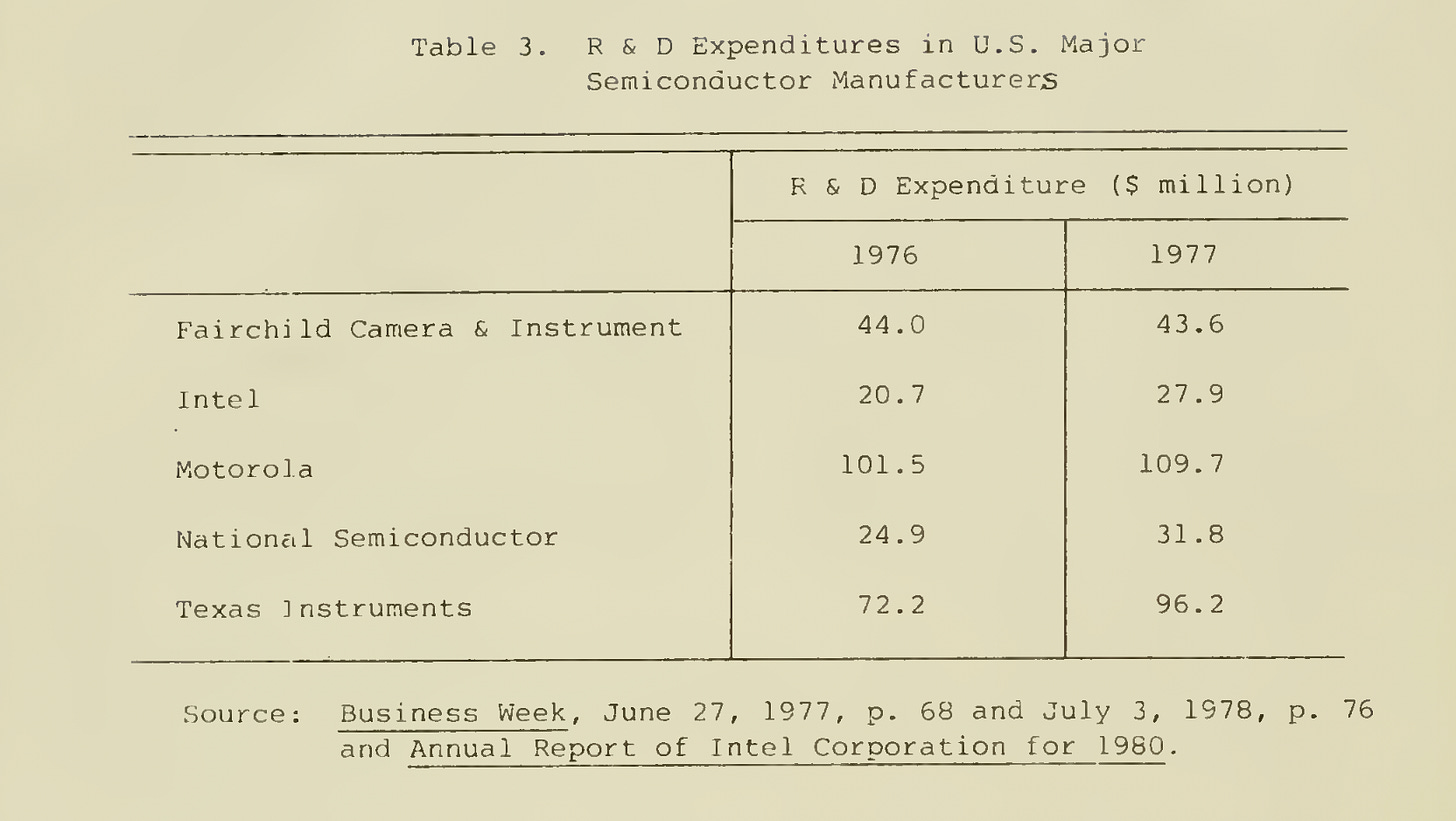

Supported by MITI, the Very Large Scale Integration (VLSI) Technology Research Association was a public-private partnership aimed at developing VLSI technology. VLSI was believed to be the next major breakthrough for advanced computing, and IBM was expected to launch a new generation of computers using VLSI circuits by 1980 at the latest. The five large private semiconductor firms in Japan at the time (Fujitsu, Hitachi, Mitsubishi Electric, Nippon Electric Co. (NEC) and Toshiba) were all members of the association. Notably, consumer electronics companies (Matsushita Electric, Sony and Sharp) were excluded. A unique aspect of the association was a cooperative laboratory staffed by researchers from all five competing firms. Government subsidies for semiconductor manufacturing were tied to cooperation between the competitors, which resulted in a highly successful joint R&D effort. In the four years from 1976 to 1980, about 70 billion yen ($283 million) was spent on VLSI research. That sum was not especially large compared with the semiconductor R&D spending of large US firms at the time.

The Association filed more than 1,000 patent applications in its four years of existence. Representatives of IBM, Fairchild, Hewlett-Packard, Texas Instruments, Motorola, Siemens and Philips, as well as of the French and German governments, visited the Association.

The US semiconductor industry was losing market share to Japanese firms. It began lobbying for government-funded research, and for trade reforms to reduce American companies’ use of Japanese chips. The US Department of Defense responded by launching the VHSIC (Very High Speed Integrated Circuit) program.

While the US led the industry in chip design, Japanese manufacturers were delivering more reliable, better-performing chips. US manufacturers could no longer deny this after a seminar in Washington, DC. There, Richard Anderson, Vice President of Computer Systems at Hewlett-Packard (HP), reported that Japanese 16K memory chips were more likely to pass standard factory inspection. He also reported that computers using them ran much longer without memory failures.

And then the United States firms fought back.

For a quick primer on how the integrated circuit became such an important invention for electronics and computing, read Jack Kilby’s Nobel Lecture, Turning Potential into Realities: The Invention of the Integrated Circuit. For a delightful, extensive read on the subject, including how ICs came to exist, The Chip by T.R. Reid is perfect. A complete list of references is given below. I’d particularly like to highlight The Knowledge Project podcast and Stratechery’s posts, which helped build my understanding of this space.

References

Book - The Chip, T.R. Reid

Book - The Intel Trinity: How Robert Noyce, Gordon Moore, and Andy Grove Built the World's Most Important Company, Michael S. Malone

Article - Political Chips, Stratechery

Article - Intel and the Danger of Integration, Stratechery

Article - How TSMC killed 450nm wafers for fear of Intel, Samsung, The Register

Article - Meet Dr. Deming, Corporate America's Newest Guru, T.R. Reid

Article - Chipmaking’s Next Big Thing Guzzles as Much Power as Entire Countries, Bloomberg

Article - Cramming More Components onto Integrated Circuits, Gordon Moore

Article - Intel's Founding, Intel

Article - The Silicon Dioxide Solution, Michael Riordan, IEEE Spectrum, 2007

Document - Technological Innovation in the Semiconductor Industry: a case study of the International Technology Roadmap for Semiconductors (ITRS), Schaller R., chapters 6-10

Document - Jean Hoerni’s Patent Notebook describing the Planar Process

Document - From Imitation to Innovation: The Very Large Scale Integrated (VLSI) Semiconductor Project in Japan, Sakakibara K., Sloan School of Management, 1983

Podcast - Semiconductors: Everything You Wanted to Know, The Knowledge Project

Video - Stanford Engineering Hero Lecture: Morris Chang in Conversation with President John L. Hennessy, Stanford University

Video - Birth of The Transistor: A video history of Japan's electronic industry (Part 1), RC286 on YouTube

Video - MOS Capacitor Explained, Jordan Edmunds